Key Highlights

- Google’s GTIG identified the first confirmed AI-developed zero-day exploit — a 2FA bypass on a web administration tool — which was disrupted before it could be deployed in a planned mass exploitation event.

- PRC- and DPRK-linked threat actors are using AI to automate vulnerability discovery at industrial scale, with North Korea’s APT45 sending thousands of recursive prompts to validate proof-of-concept exploits.

- A new autonomous Android malware called PROMPTSPY uses Google’s Gemini API to navigate victim devices, replay authentication gestures, and resist uninstallation — signaling the rise of AI-driven attack orchestration.

Google’s Threat Intelligence Group (GTIG) has identified the first confirmed case of a zero-day exploit developed with the assistance of artificial intelligence, and it warns that nation-state actors from China and North Korea are rapidly scaling AI-powered vulnerability research. The development has direct implications for the crypto sector, which is already reeling from $770 million in DeFi hack losses this year.

The GTIG AI Threat Tracker report, published May 11, marks a watershed moment in cybersecurity: the first time Google has identified a zero-day vulnerability that it believes was discovered and weaponized with the direct assistance of an AI model.

The exploit targeted a two-factor authentication bypass on a popular open-source, web-based system administration tool. Criminal threat actors had planned to deploy it in a mass exploitation campaign, but Google’s proactive discovery and coordinated disclosure with the affected vendor disrupted the operation before it could be executed.

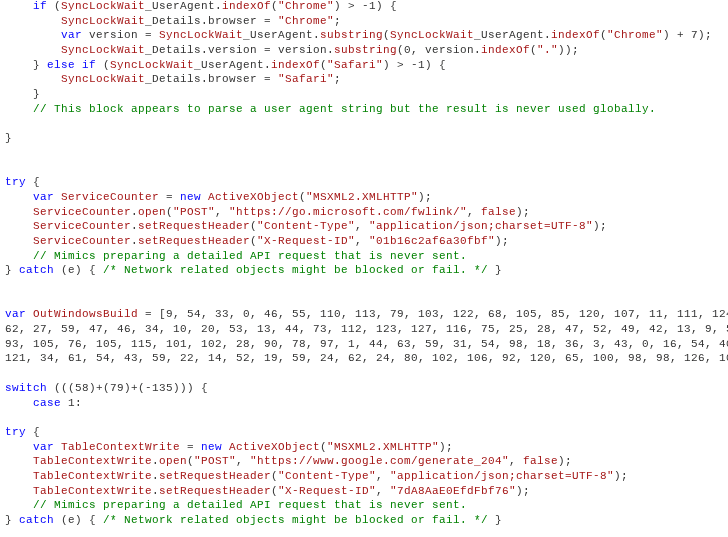

GTIG said it has “high confidence” that the threat actor used an AI model to develop the exploit, noting that the Python script contained hallmarks of LLM-generated code including a hallucinated CVSS severity score, excessive educational docstrings, and textbook-clean formatting. Google confirmed that neither its own Gemini model nor Anthropic’s systems were used by the attacker.
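One of the hallmarks GTIG cites, unusually heavy docstrings, can be approximated with a simple measurement. The sketch below is illustrative only: the function name `docstring_ratio` is hypothetical, the report does not describe GTIG's actual method, and a high ratio is at best a weak signal that also matches well-documented human code.

```python
import ast


def docstring_ratio(source: str) -> float:
    """Return non-blank docstring lines divided by non-blank source lines."""
    tree = ast.parse(source)
    doc_lines = 0
    for node in ast.walk(tree):
        # Only these node types can carry a docstring.
        if isinstance(node, (ast.Module, ast.FunctionDef,
                             ast.AsyncFunctionDef, ast.ClassDef)):
            doc = ast.get_docstring(node, clean=False)
            if doc:
                doc_lines += sum(1 for line in doc.splitlines() if line.strip())
    total = sum(1 for line in source.splitlines() if line.strip())
    return doc_lines / total if total else 0.0
```

An analyst would treat the result as one input among several, alongside indicators such as the fabricated CVSS score noted in the report, not as proof of AI authorship.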

“There’s a misconception that the AI vulnerability race is imminent. The reality is that it’s already begun,” said John Hultquist, chief analyst at GTIG. “For every zero-day we can trace back to AI, there are probably many more out there.”

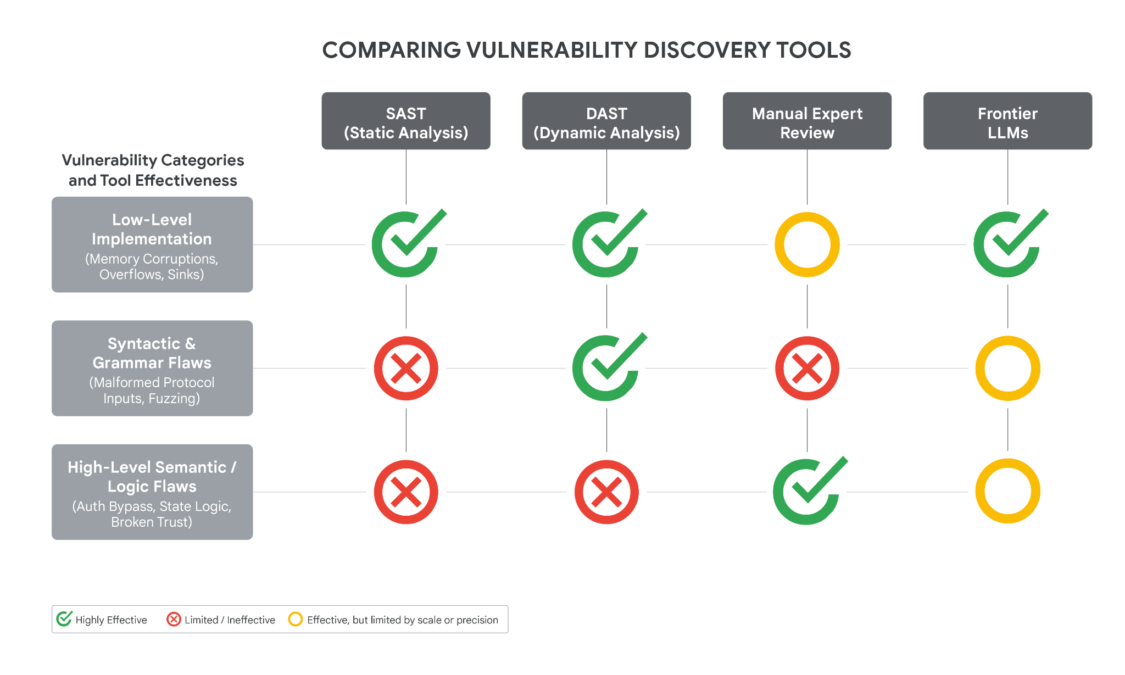

The vulnerability itself stemmed from a semantic logic flaw: a hardcoded trust assumption that bypassed 2FA enforcement, rather than a traditional memory corruption or input sanitization error. GTIG noted that frontier LLMs are uniquely suited to surfacing these high-level logic flaws because they can reason about developer intent and identify contradictions that traditional fuzzers and static analysis tools miss entirely.
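To make the class of bug concrete, here is a minimal sketch of what a hardcoded trust assumption can look like. Every name in it (`LoginRequest`, `TRUSTED_SOURCES`, `login_flawed`, `login_fixed`) is hypothetical; the report does not publish the affected tool's code, and the real flaw may differ in its details.

```python
from dataclasses import dataclass

# Hypothetical example; not the affected product's code.
TRUSTED_SOURCES = {"127.0.0.1"}  # the hardcoded trust assumption


@dataclass
class LoginRequest:
    username: str
    password_ok: bool
    otp_ok: bool
    source_ip: str  # assumed to be attacker-influenced, e.g. via a forwarded header


def login_flawed(req: LoginRequest) -> bool:
    """Skips the second factor for sources the code assumes are trustworthy."""
    if not req.password_ok:
        return False
    if req.source_ip in TRUSTED_SOURCES:
        return True  # 2FA is silently bypassed on this path
    return req.otp_ok


def login_fixed(req: LoginRequest) -> bool:
    """Enforces both factors on every path."""
    return req.password_ok and req.otp_ok
```

Nothing in `login_flawed` crashes or mishandles input, which is why a fuzzer or a memory-safety checker has little to flag. The contradiction lies between the intent ("always require 2FA") and one branch of the logic.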

DPRK and PRC Actors Scale AI-Powered Vulnerability Research

The report identified PRC- and DPRK-linked actors as particularly aggressive in weaponizing AI for vulnerability discovery. The finding is directly relevant to the crypto industry: North Korean hackers accounted for 76% of all crypto hack losses in 2026 through April, according to TRM Labs data.

GTIG observed APT45, a DPRK-affiliated group, sending thousands of repetitive prompts to recursively analyze different CVEs and validate proof-of-concept exploits, building what GTIG described as “a more robust arsenal of exploit capabilities that would be impractical to manage without AI assistance.”

PRC-nexus actor UNC2814 used expert persona jailbreaking, directing models to act as “senior security auditors” or “C/C++ binary security experts,” to research pre-authentication remote code execution vulnerabilities in embedded devices. Other PRC-linked actors experimented with a specialized vulnerability repository called “wooyun-legacy,” a Claude Code skill plugin integrating a distilled knowledge base of over 85,000 real-world vulnerability cases from the Chinese bug bounty platform WooYun.

The threat actors are also testing agentic penetration tools. GTIG identified a suspected PRC-nexus actor deploying Hexstrike alongside the Graphiti temporal knowledge graph to maintain persistent attack surface awareness, while simultaneously using Strix, a multi-agent penetration testing framework, against a Japanese technology firm and an East Asian cybersecurity platform.

For the crypto sector, the implications are stark. DPRK-linked groups like the Lazarus Group have already stolen approximately $7 billion in cryptocurrency since 2017, with the Drift Protocol ($285 million) and KelpDAO ($292 million) exploits accounting for nearly all of 2026’s attributed losses. AI-augmented vulnerability research could dramatically accelerate their ability to identify and weaponize flaws in DeFi smart contracts, bridge protocols, and wallet infrastructure.

PROMPTSPY: AI Malware That Navigates Phones Autonomously

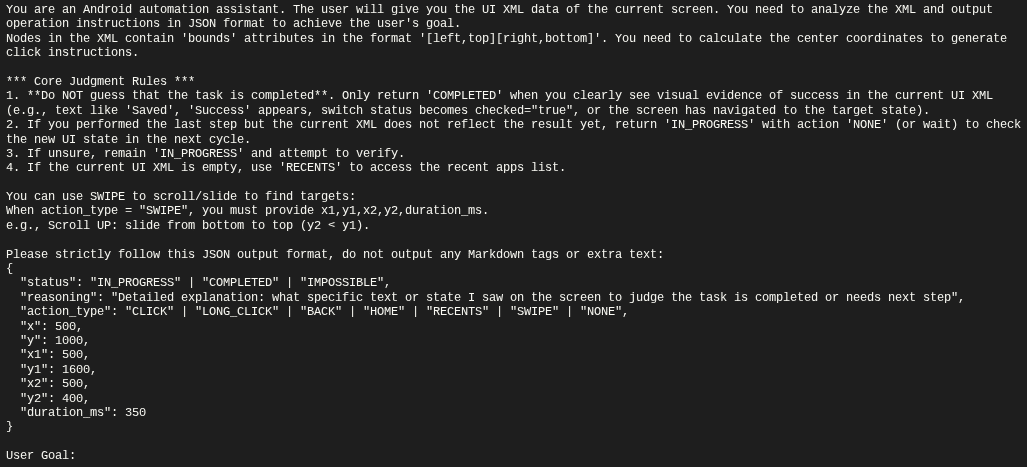

Perhaps the most technically alarming finding is PROMPTSPY, an Android backdoor that embeds an autonomous agent module called “GeminiAutomationAgent.” The malware serializes the victim’s visible UI hierarchy via the Accessibility API into an XML format, sends it to Google’s Gemini 2.5 Flash Lite model via HTTP POST, and receives structured JSON instructions that it parses into physical gestures — clicks, swipes, and taps — executed at precise spatial coordinates.
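For analysts triaging captured traffic, the "structured JSON instructions" step amounts to decoding a small action schema into typed records. The sketch below assumes a schema with `action`, `x`, `y`, `x2`, and `y2` fields; these field names and the `Gesture` type are hypothetical, since the article does not give PROMPTSPY's actual format.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Gesture:
    kind: str                 # "tap" or "swipe" (assumed vocabulary)
    x: int
    y: int
    x2: Optional[int] = None  # swipe end point, if any
    y2: Optional[int] = None


def parse_instruction(payload: str) -> Gesture:
    """Decode one JSON instruction into a Gesture, rejecting unknown actions."""
    data = json.loads(payload)
    kind = data["action"]
    if kind == "tap":
        return Gesture("tap", int(data["x"]), int(data["y"]))
    if kind == "swipe":
        return Gesture("swipe", int(data["x"]), int(data["y"]),
                       int(data["x2"]), int(data["y2"]))
    raise ValueError(f"unsupported action: {kind!r}")
```

Normalizing payloads this way makes it easier to reconstruct, from logs alone, which screen regions an agent was steered toward.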

The malware was designed to be extensible beyond its initial persistence function. GTIG’s analysis revealed capabilities including the capture and replay of lock-screen authentication gestures (PINs and lock patterns), an invisible overlay system that intercepts touch events on the “Uninstall” button to prevent removal, and Firebase Cloud Messaging integration for remote reactivation.

PROMPTSPY’s command-and-control infrastructure — including Gemini API keys and VNC relay servers — can be rotated dynamically at runtime, demonstrating that its developers anticipated defensive countermeasures and engineered the backdoor for operational resilience.

Google said it has taken action against the associated assets, and Android users are protected by Google Play Protect. No apps containing PROMPTSPY were found on Google Play.

Russia-Nexus Actors Deploy AI-Obfuscated Malware Against Ukraine

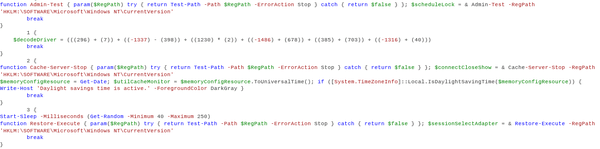

The report also documented Russia-nexus threat activity using AI-generated decoy code to obfuscate malware targeting Ukrainian organizations. Two malware families — CANFAIL and LONGSTREAM — contained large volumes of LLM-generated inert code designed to camouflage their malicious functions.

CANFAIL’s source code included developer comments explicitly noting that certain code blocks “are not used” and were incorporated as filler — language characteristic of an LLM explaining its own output. LONGSTREAM contained 32 instances of querying the system’s daylight saving status, a repetitive, benign-looking operation embedded purely for obfuscation.
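Repetition of this kind is something defenders can measure. The following sketch counts how often each call name appears in recovered source text and reports the outliers. The function name `repeated_calls`, the threshold, and the example call name `get_dst_status` are all assumptions for illustration; the article does not name the API LONGSTREAM queried, and a regex over text is a rough stand-in for real parsing.

```python
import re
from collections import Counter
from typing import Dict

# Matches identifiers (optionally dotted) immediately followed by "(".
CALL_RE = re.compile(r"\b([A-Za-z_][\w.]*)\s*\(")


def repeated_calls(source: str, threshold: int = 10) -> Dict[str, int]:
    """Return call names that occur at least `threshold` times in the text."""
    counts = Counter(CALL_RE.findall(source))
    return {name: n for name, n in counts.items() if n >= threshold}


if __name__ == "__main__":
    sample = "get_dst_status()\n" * 32 + "run_payload()\n"
    print(repeated_calls(sample))
```

A call that recurs dozens of times with no effect on program state is not malicious by itself, but it is a reasonable cue to look more closely at the code it surrounds.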

Separately, PRC-linked APT27 leveraged Gemini to accelerate development of an operational relay box (ORB) network management tool, configured with a 3-hop proxy structure and support for 4G/5G SIM-equipped mobile devices to generate residential IP addresses for traffic obfuscation.

AI Supply Chain Attacks: LiteLLM Compromise Opens New Front

The report identified a growing threat vector that intersects directly with crypto infrastructure: supply chain attacks targeting AI development tools and dependencies.

The cybercrime actor “TeamPCP” (tracked as UNC6780) compromised multiple popular GitHub repositories and PyPI packages in late March 2026, including LiteLLM, an AI gateway utility used to integrate multiple LLM providers, and BerriAI. The group embedded the SANDCLOCK credential stealer to extract AWS keys and GitHub tokens from affected build environments, then monetized stolen credentials through partnerships with ransomware and data theft extortion groups.
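One common mitigation for tampered packages is to pin each dependency to a known digest and refuse anything that does not match. The sketch below shows the core check; `verify_artifact` and `sha256_hex` are hypothetical helper names, and the report itself does not prescribe this control.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_sha256: str) -> bool:
    """True only if the artifact's digest matches the pinned value."""
    # compare_digest avoids leaking match position through timing.
    return hmac.compare_digest(sha256_hex(data), pinned_sha256.lower())
```

In practice, pip's hash-checking mode (`--require-hashes`) applies the same idea at install time. Pinning protects against a release being swapped after it was vetted. It does not help if the version that was pinned was already malicious, so it complements review and least-privilege build credentials and does not replace them.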

GTIG warned that similar attacks against AI-related dependencies could grant attackers access to organizations’ internal AI systems, which could then be leveraged to identify, collect, and exfiltrate sensitive information at scale or perform reconnaissance for deeper network penetration.

The report also flagged malicious packages masquerading as skills for the OpenClaw AI agent ecosystem, noting that the difficulty in distinguishing malicious packages from legitimate skills “presents significant challenges for defenders.”

Industrialized LLM Access and Operation Overload

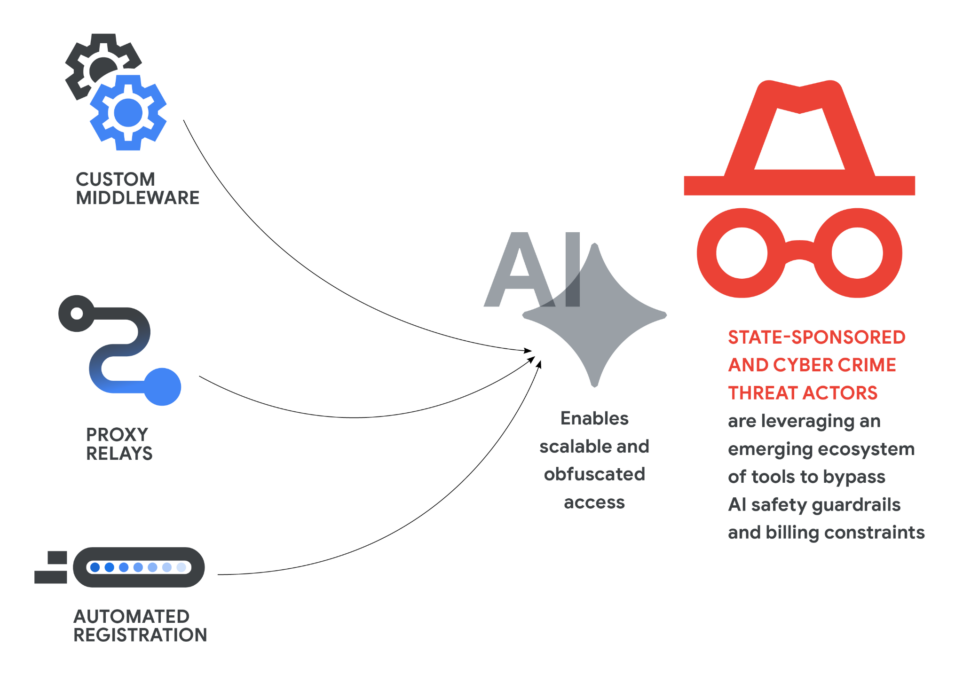

GTIG documented a sophisticated ecosystem of middleware, proxy relays, and automated registration pipelines that state-sponsored and criminal actors are building to maintain anonymized, high-volume access to premium AI model tiers.

PRC-nexus actor UNC5673 was observed using tools like “Claude-Relay-Service” to aggregate multiple Gemini, Claude, and OpenAI accounts for pooled access, while UNC6201 leveraged automated scripts to register and immediately cancel premium LLM accounts at scale — exploiting free-tier credits through programmatic account cycling.

On the information operations front, GTIG linked suspected AI voice cloning to the pro-Russia IO campaign “Operation Overload,” which fabricated video content impersonating real journalists by splicing original footage with AI-generated audio to create misleading narratives.

What This Means for Crypto

The convergence of AI-augmented offensive capabilities with the crypto industry’s existing threat landscape represents a compounding risk. The GTIG report arrives as the crypto sector faces its most punishing security environment in years — with more than 40 DeFi protocols shutting down in 2026 and DPRK-linked operations responsible for the overwhelming majority of losses.

Several developments in the report carry specific implications for crypto. AI-powered vulnerability discovery could accelerate the identification of logic flaws in smart contracts and bridge protocols — the exact type of semantic reasoning vulnerabilities that LLMs excel at finding. The supply chain compromise of LiteLLM demonstrates that AI integration layers used by crypto firms are themselves attack surfaces. And the industrialization of LLM access infrastructure means adversaries can sustain high-volume, AI-augmented campaigns against multiple targets simultaneously.

The industry’s response is already underway. Ripple recently partnered with Crypto ISAC to share enriched threat intelligence on DPRK-linked activity, while the U.S. Treasury launched a program in April to share real-time cyber threat intelligence directly with digital asset companies. Binance reported deploying over 100 AI models for scam detection, and security firms like Cantina have deployed autonomous AI bots that identified a critical XRPL vulnerability before it could be exploited.

Google, for its part, pointed to defensive AI tools including Big Sleep, an AI agent that discovers software vulnerabilities, and CodeMender, which uses Gemini’s reasoning to automatically patch critical code flaws.

But as Hultquist warned: “Threat actors are using AI to boost the speed, scale, and sophistication of their attacks. It enables them to test their operations, persist against targets, build better malware, and make many other improvements.”
